Google TurboQuant: New Algorithm Shrinks AI Memory Usage Sixfold

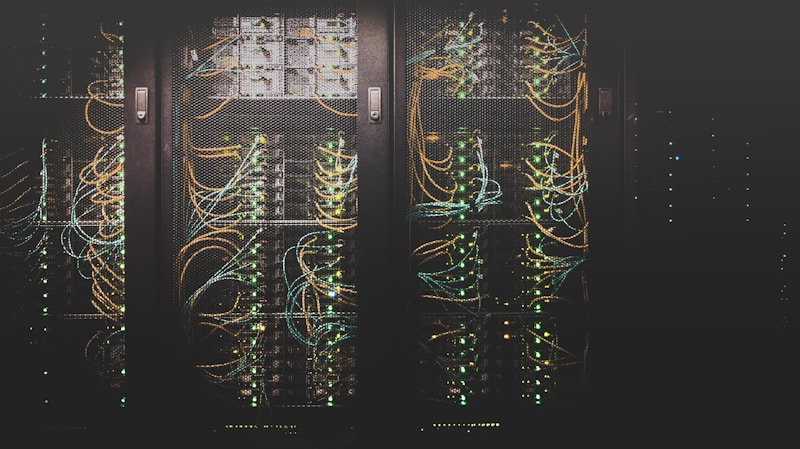

Google Research has unveiled TurboQuant, a quantization algorithm that reduces the memory footprint of large language models roughly sixfold while preserving over 99% of their original benchmark performance. The technique lets large models that previously required expensive multi-GPU server configurations run efficiently on a single consumer-grade graphics card. This could open cutting-edge AI capabilities to individual developers, small businesses, and researchers with limited computing budgets.

How TurboQuant Works

Traditional quantization methods reduce model precision from 16-bit or 32-bit floating-point numbers to lower bit-widths such as 8-bit or 4-bit integers. While effective at reducing memory usage, these approaches typically introduce noticeable quality degradation, especially at the most aggressive compression levels. TurboQuant addresses this limitation with a technique called “adaptive mixed-precision quantization”. It analyzes how sensitive each layer and attention head in the model is to precision loss and assigns each component its own bit-width. Critical layers that handle complex reasoning are kept at higher precision, while less sensitive components are compressed more aggressively. The algorithm also introduces a novel calibration process that adjusts the quantized weights to compensate for precision loss.
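The idea of sensitivity-driven bit allocation can be made concrete with a small sketch. This is not TurboQuant's algorithm, and the article does not describe its internals. The sketch makes two assumptions:

- Sensitivity is measured as weight-reconstruction error: the mean squared error after symmetric uniform quantization with one scale per layer.
- Bits are allocated greedily under an average-bits-per-weight budget.

The functions `fake_quantize`, `mse`, and `allocate_bits`, and the layer names `attn` and `mlp`, are hypothetical. The calibration step is not modeled.

```python
import random


def fake_quantize(weights, bits):
    """Symmetric uniform quantization: map floats to signed integers in
    [-qmax, qmax] using one shared scale, then map back to floats so the
    rounding error can be measured. Requires bits >= 2."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in weights)
    if max_abs == 0.0:
        return list(weights)
    scale = max_abs / qmax
    return [max(-qmax, min(qmax, round(w / scale))) * scale for w in weights]


def mse(a, b):
    """Mean squared reconstruction error between two equal-length lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def allocate_bits(layers, choices, avg_bit_budget):
    """Greedy mixed-precision allocation (hypothetical, not TurboQuant's method).

    layers: dict mapping a layer name to its flat list of weights
    choices: ascending candidate bit widths, e.g. [2, 4, 8]
    avg_bit_budget: maximum parameter-weighted average bits per weight
    """
    sizes = {name: len(w) for name, w in layers.items()}
    budget = avg_bit_budget * sum(sizes.values())
    # Assumed sensitivity proxy: reconstruction error of each layer at each bit width.
    err = {name: {b: mse(w, fake_quantize(w, b)) for b in choices}
           for name, w in layers.items()}
    alloc = {name: choices[0] for name in layers}  # start everything at the lowest precision
    used = sum(sizes[name] * alloc[name] for name in layers)

    while True:
        best = None
        for name in layers:
            i = choices.index(alloc[name])
            if i + 1 == len(choices):
                continue  # already at the highest precision
            new_bits = choices[i + 1]
            extra = sizes[name] * (new_bits - alloc[name])
            if used + extra > budget:
                continue  # this upgrade would exceed the memory budget
            # Total squared-error reduction per extra bit spent.
            gain = (err[name][alloc[name]] - err[name][new_bits]) * sizes[name] / extra
            if best is None or gain > best[0]:
                best = (gain, name, new_bits, extra)
        if best is None:
            return alloc  # no affordable upgrade remains
        _, name, new_bits, extra = best
        alloc[name] = new_bits
        used += extra


if __name__ == "__main__":
    random.seed(0)
    layers = {
        # Hypothetical layers: "attn" has much larger weights, so rounding hurts it more.
        "attn": [random.gauss(0.0, 1.0) for _ in range(1000)],
        "mlp": [random.gauss(0.0, 0.01) for _ in range(1000)],
    }
    print(allocate_bits(layers, choices=[2, 4, 8], avg_bit_budget=3.0))
```

Run as a script, it prints `{'attn': 4, 'mlp': 2}`. With a budget of 3 bits per weight on average, the greedy search spends the extra bits on the layer whose weights suffer most from rounding. Many published methods measure sensitivity on activations or the loss rather than on raw weights. They also often use per-channel or per-group scales instead of one scale per layer.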

Benchmark Results and Performance Analysis

In extensive testing across multiple model families, including Gemma, Llama, and Mistral, TurboQuant produced consistent results. A 70-billion-parameter model compressed with TurboQuant achieved 99.2% of the full-precision model’s MMLU score while fitting into just 12 GB of GPU memory, compared with the 70+ GB required by the original model. On coding benchmarks, quality retention was even higher at 99.6%. This suggests the algorithm is particularly effective at preserving the structured reasoning that programming tasks depend on. Inference speed also improved by approximately 40%, because smaller weights mean less data has to be read from GPU memory for each generated token.
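The memory figures can be sanity-checked with simple arithmetic: weight storage in bytes is the number of parameters times the bits per weight, divided by 8. The sketch below makes these assumptions:

- It counts weights only, leaving out activations, the KV cache, and quantization metadata such as per-group scales.
- It uses 1 GB = 10^9 bytes.

The helper names are hypothetical.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Memory for the weights alone, in GB (10**9 bytes).
    Ignores activations, the KV cache and quantization metadata such as scales."""
    return num_params * bits_per_weight / 8 / 1e9


def implied_bits_per_weight(num_params, memory_gb):
    """Average bits per weight needed to fit num_params weights into memory_gb."""
    return memory_gb * 1e9 * 8 / num_params


PARAMS = 70e9  # a 70-billion-parameter model
for bits in (32, 16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_gb(PARAMS, bits):6.1f} GB")
print(f"12 GB implies ~{implied_bits_per_weight(PARAMS, 12):.2f} bits per weight")
```

Under these assumptions, the weights need 140 GB at 16-bit and 70 GB at 8-bit. Fitting them into 12 GB implies an average of roughly 1.4 bits per weight. Going from 70 GB to 12 GB is a reduction of about 5.8×.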

Impact on the AI Ecosystem

The release of TurboQuant as an open-source tool is expected to have far-reaching implications for the AI industry. Small and medium-sized enterprises that previously relied on expensive cloud API calls to access large language model capabilities can now deploy comparable models on their own hardware at a fraction of the cost. The education sector stands to benefit significantly, as universities and research institutions can run state-of-the-art experiments without access to expensive GPU clusters. Google has also announced integration of TurboQuant into its Vertex AI platform, allowing enterprise customers to reduce their inference costs by up to 80%.

Future Development and Community Adoption

Google Research plans to continue developing TurboQuant with support for additional model architectures and hardware platforms. The next version, expected in Q3 2026, will add support for vision-language models and audio processing systems. The open-source community has already begun contributing extensions, including optimizations for AMD GPUs and Apple Silicon processors. Industry analysts predict that TurboQuant and similar quantization advances could accelerate the trend toward on-device AI processing, reducing reliance on cloud infrastructure and improving data privacy for sensitive applications.
